What We Need to Know About EdTech, Data, and Privacy

An FAQ about why EdTech companies need, want, and collect your child's data and what you can do about it

Note from Emily: This essay was originally posted on my website in April 2025. I have lightly edited it to reflect changes over the past year and to add new information. One important note on terminology: I do not call them EdTech “tools” because if the business model of the curricula or app or diagnostic “tool” being used is one that profits from data (and 96% do), then it is not a tool, it is a product whose determinants for success are fundamentally out of alignment with healthy child development.

EdTech, like Big Tech, is an industry.

EdTech, like Big Tech, is in this for profit, not children.

EdTech is just Big Tech in a sweater vest.

The question I hear all the time—

When it comes to data and privacy issues around EdTech, I am regularly asked: “So what? Why does it matter? Why should we care?”

The very short and very important initial response to this question is that the erosion of privacy rights is the first step in the erosion of other rights. EVEN IF we as adults are knowingly consenting to all the data collection going on in our digital lives (and trust me, we do not have a full grasp on that reality), children are minors who cannot legally consent and who often have no idea that their data is being collected, shared, sold, or used in ways that benefit corporations, not families or schools.

That response alone should be reason enough to care. But if you want to learn more, here are some common questions that will provide more information:

Q: How are children using “EdTech” products at school?

A: “EdTech” or “educational technology” can mean any of the following: 1:1 district-issued Chromebooks, online curricula, games or apps used for “learning,” “learning management systems” (like Schoology), online grading portals, diagnostic and assessment products (like iReady), digital surveillance or “safety” products (like Securly or GoGuardian), online mental health assessments, online state testing, and more.

Broadly speaking, the digital products children use at or for school are owned by any number of technology companies, not the schools themselves. It’s also not just a few platforms or products per school; it’s hundreds or even thousands. Per district. There is often a wide range in how different schools, grades, subjects, and teachers use such products. Some teachers use them at the bare minimum; other classes are using them every period, every day. Some districts mandate use of products like iReady, whether teachers like them or not. With other products, even within the same grade or same subject at the same school, usage rates can vary wildly.

Q: What should I worry about as a parent when it comes to EdTech products?

A: This is deserving of its own essay (and I’ve written many on this publication), but there are (at least) two big concerns:

First, such products rarely, if ever, are better than an analog or teacher-based approach when it comes to efficacy (in other words, “Does this product work?”) See the UNESCO GEM Report for more on that. It is also difficult to find independently-funded research on EdTech– most is funded by the companies who benefit from the use of such products. Internet Safety Labs is one good source for independent research on EdTech platforms and tools. Obviously, Dr. Jared Cooney Horvath is another. Or read any number of excellent essays about iReady as it not only siphons data but erodes and impedes actual learning: here and here and here are a few good examples.

But secondly, and arguably more importantly, even if these products and tools were “beneficial” to learning and teaching (and how to measure “benefit” and “success” is in and of itself a debate), nearly all these EdTech companies collect data about the children using the products and share or sell it to third parties for profit. In fact, Internet Safety Labs found that 96% of EdTech tools share children’s personal information with third parties.

Q: Wait. Why would an EdTech company want to collect my child’s data?

A: In the digital age gold rush, data is gold. This is how “free” social media apps work– we aren’t paying to use the service, we are giving Meta and Snap information about us as consumers that they collect and repackage to target us with advertisements (you’ve probably noticed how an innocent Amazon search on your phone ends up in similarly-themed ads in your Instagram feed.)

The same thing is happening with EdTech products. Because the apps, digital curricula, and learning management systems used by children at or for school are owned by for-profit technology companies, “time on device” is the measure by which such companies determine product success. These are private companies with shareholders who want to see profits (many are connected to venture capital, like PowerSchool, which is owned by Bain Capital). The profits come from licensing agreements with school districts (often in the millions of dollars) and from the data they collect about their customers, in this case our children. Sometimes districts are offered a low-cost or free first-year license by EdTech company salespeople, only to find that rates start to climb in subsequent years, after schools are already deeply enmeshed in the product and extrication would be messy and complicated. (One large school district IT director I spoke to expressed surprise that the district’s licensing costs were rising 3-5% a year. Of course, making up those costs means cuts are going to have to come from somewhere else.)

Children’s data is also extremely valuable. The United States Department of Education found that one student data record is worth between $250 and $350 on the black market. There are nearly 50 million school-aged children in the United States, which is why the EdTech industry is a huge and lucrative market, projected to be worth over $800 billion in the next decade (aggregate amount of spending over hardware, software, and cloud services globally.) Today, some estimates place its value at $205 billion.

If you take 50 million children and multiply that by ~$300 per student record, that totals $15 billion worth of data. It’s no wonder hackers find children’s data such an appealing target.
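For anyone who wants to check that math, here is a minimal sketch of the calculation. It uses only the round numbers quoted above (about 50 million children, and $250 to $350 per record, with ~$300 as the midpoint); the variable and function names are mine, purely for illustration.

```python
# Back-of-the-envelope check of the figures quoted in this essay.
# Inputs are the essay's round numbers, not precise counts.
SCHOOL_AGED_CHILDREN = 50_000_000  # "nearly 50 million"
LOW, HIGH = 250, 350               # quoted per-record range, in US dollars

midpoint = (LOW + HIGH) / 2        # 300.0, the "~$300" used above


def total_value(per_record: float, records: int = SCHOOL_AGED_CHILDREN) -> float:
    """Multiply a per-record value by the number of student records."""
    return per_record * records


print(f"Midpoint estimate: ${total_value(midpoint) / 1e9:.1f} billion")
print(f"Range: ${total_value(LOW) / 1e9:.1f} to ${total_value(HIGH) / 1e9:.1f} billion")
```

Using the low and high ends of the quoted range instead of the midpoint puts the total somewhere between $12.5 billion and $17.5 billion.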

Q: What kind of data do EdTech companies collect?

A: Here is a non-exhaustive list of what EdTech companies may collect about our children, from one sample privacy policy:

Enrollment data

Course schedule data

Student identifiers

Academic program membership

Extracurricular program membership

Transcript data

Student-submitted materials within a course

Student grades

Student assessments

Student address

Student email address

Phone numbers

Student name

Student date of birth

Parent or guardian name

Student conduct data

Student behavior data

Student social-emotional learning indicators and inputs

Student evaluation and management data

Physical and mental disabilities

Immunization records

Treatment providers

Allergies

Email delivery preferences

Communications with stakeholders, such as parents

Usage data

Browser data

Device-specific data, including:

Data security

Cloud hosting

Information technology

Customer support

Usage and analytics

Application performance monitoring

User identity

User authentication

Feature integration with third-party products or companies

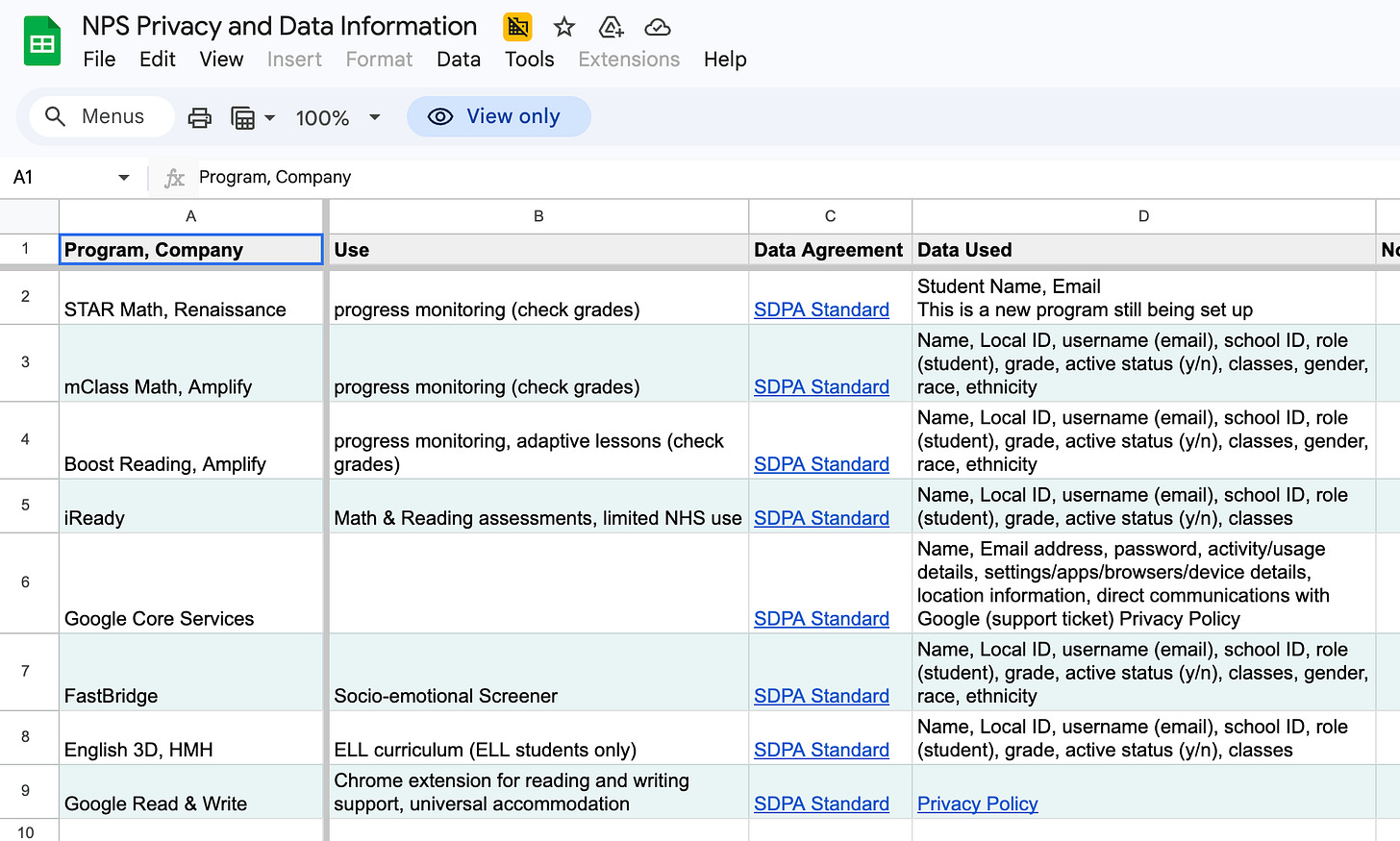

At a bare minimum, districts should publicly post on their website a list of all EdTech products used and link to their unique privacy policies. Northampton Public Schools in Massachusetts is one rare example of a district that does this (if you know of more, please let me know). They also provide access to a spreadsheet that shows apps and data agreements by grade level, but the person who shared it with me notes that it is in need of updating. Even if your child’s district doesn’t use the same apps or products from this list, these links are extremely interesting to look at. The fact that the list of “approved” apps alone numbers 373 unique products is shocking.

Q: But isn’t all of this personal information anonymous?

A: Unfortunately, no. Schools may tell parents it is, but that is because they are told by EdTech companies that the data is “anonymized.” Given how much data is collected, however, it is not difficult to reverse-engineer data to identify students individually. There are even companies that perform “identity-resolution”: taking anonymized data collected about users, re-identifying that data, and selling it.

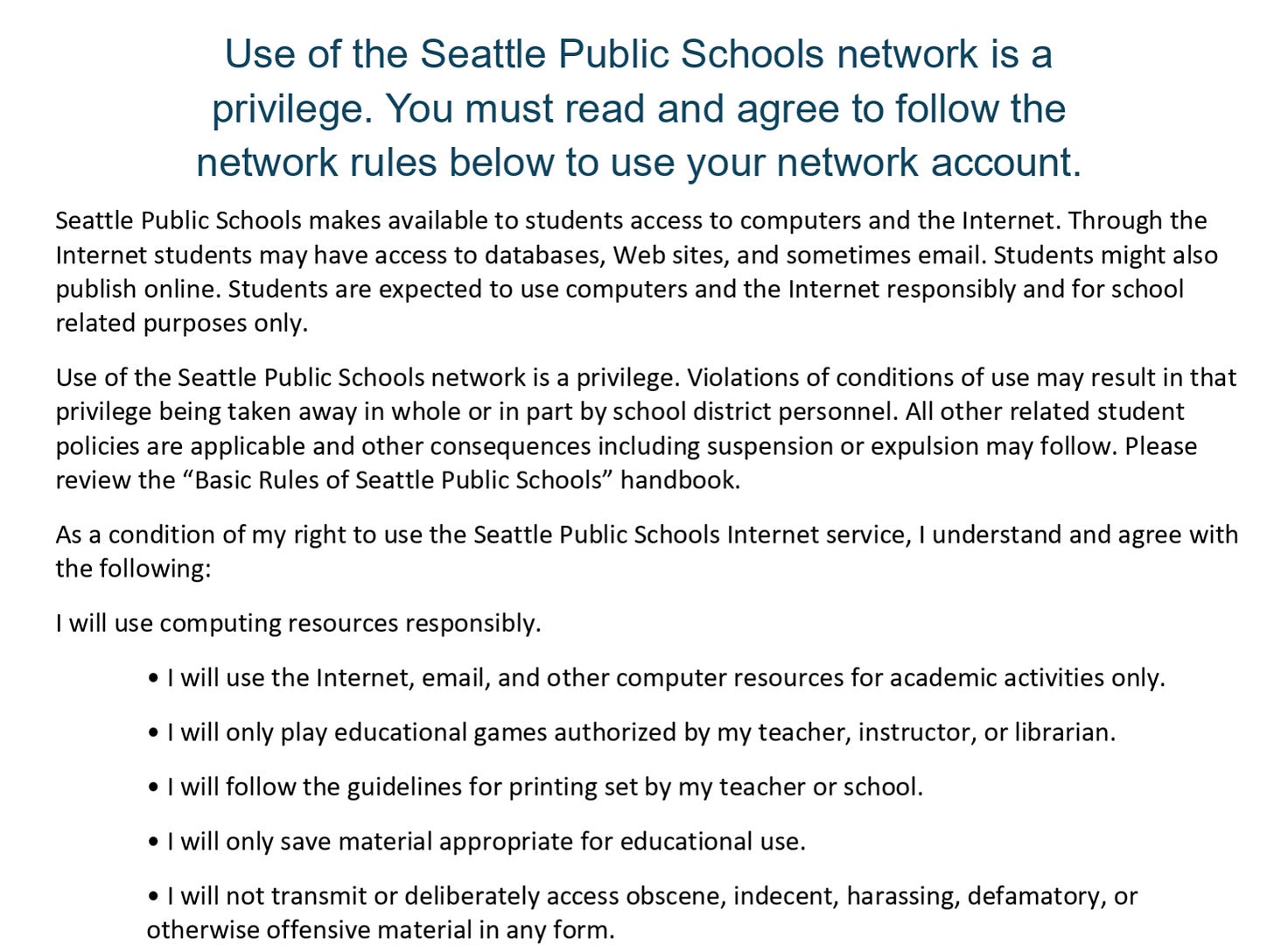
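To see why “anonymized” does not mean anonymous, here is a minimal sketch of a linkage attack, the basic idea behind re-identification. Everything in it is invented for illustration: the records, the names, the zip codes, and the field names (`zip_code`, `birth_date`, `grade`) are all hypothetical, and I am not describing how any particular company does this. The point is only that a few ordinary details, combined, can single out one child.

```python
# Toy linkage attack. All records, names, and field names are made up.

# An "anonymized" export: names removed, other details kept.
anonymized_records = [
    {"zip_code": "00001", "birth_date": "2012-03-14", "grade": 8, "reading_score": 412},
    {"zip_code": "00001", "birth_date": "2012-07-02", "grade": 8, "reading_score": 530},
    {"zip_code": "00002", "birth_date": "2012-03-14", "grade": 8, "reading_score": 487},
]

# A separate list that does include names (for example, a team roster).
named_roster = [
    {"name": "Student A", "zip_code": "00001", "birth_date": "2012-03-14", "grade": 8},
    {"name": "Student B", "zip_code": "00002", "birth_date": "2012-03-14", "grade": 8},
]

# Ordinary details that are not names but can identify someone when combined.
QUASI_IDENTIFIERS = ("zip_code", "birth_date", "grade")


def key(record):
    """Combine the quasi-identifiers of one record into a single lookup key."""
    return tuple(record[field] for field in QUASI_IDENTIFIERS)


def reidentify(anonymized, roster):
    """Return (name, reading_score) for records that match exactly one named person."""
    names_by_key = {}
    for person in roster:
        names_by_key.setdefault(key(person), []).append(person["name"])

    matches = []
    for record in anonymized:
        candidates = names_by_key.get(key(record), [])
        if len(candidates) == 1:  # a unique match means the record is re-identified
            matches.append((candidates[0], record["reading_score"]))
    return matches


for name, score in reidentify(anonymized_records, named_roster):
    print(f"{name} -> reading score {score}")
```

In this toy example, two of the three “anonymous” records are matched to a named student using nothing more than a zip code, a birth date, and a grade level. The more fields a product collects, the easier this kind of matching becomes.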

Q: I know I signed a User Agreement or agreed to some Terms and Conditions when the school gave my child a laptop. If I checked that box or signed that agreement, doesn’t that mean I consented to all of this?

A: No. First, many of those “User Agreements” that schools require parents to sign at the start of the school year say nothing about the school’s responsibility to protect student data and focus almost entirely on “student use” of the school-issued EdTech products (“Students will use it responsibly/for educational purposes only”) and that it is a family’s responsibility to replace damaged or lost laptops. If the document doesn’t say anything about what happens when your child is harmed on a school-issued device, then the “agreement” is not there to protect your child; it’s there to (ostensibly) protect the school from liability if or when harm occurs (and it does, often).

It is extremely unlikely you have been fully informed of potential risks to your child’s information being collected or about the various ways in which these companies might use your child’s data, and you certainly did not receive detailed specifics about each individual app or product (which can, as I note above, run into the hundreds). Most schools are not even fully informed of the risks, and that’s not surprising given that there are only a handful of IT administrators responsible for overseeing hundreds of unique products. And those are just the ones that are vetted through proper channels. Many EdTech salespeople approach teachers and principals directly, bypassing any public curriculum adoption process or formal vetting process.1

EdTech companies would like parents to think that they have consented, but legally speaking, these blanket “User Agreements” are not “informed consent” and would not hold up in court. If you have any concerns, please contact Andrew and Julie Liddell of the EdTech Law Center. They are a consumer protection firm that will speak to you free of charge to help you understand your rights.2

Q: What are some consequences of these companies having my child’s data? How do they use this data in ways that might harm children?

A: The erosion of privacy rights is dangerous because worse civil rights violations can follow. Aside from the fact that a vast trove of your child’s personal information is now on the internet, there are numerous ways this can harm a child.

Web searches about controversial topics on school-issued devices, even when a child is simply researching them for a school assignment, can flag a student as interested in extremist behavior, conspiracy theories, and more.

The information students search, send, or type on digital platforms can later be used against them in college admissions or job applications.

Law enforcement agencies have used student data to flag “at-risk” children. There are huge implications for police departments having access to this type of data. As one example, law enforcement agencies can use student behavioral records to predict future criminal behavior.

The data collected about children can also be used to deliver targeted ads that can contain dangerous messaging about self-harm, health misinformation, or extremist behaviors.

Data breaches are also common. PowerSchool, Inc., a $5 billion EdTech company (owned by Bain Capital), was the recent target of a massive data breach in which over forty years of data on over 70 million people were leaked. More recently, Instructure, the parent company of Canvas (used by 8,000 institutions, according to its website), was the target of a student data breach.

EdTech companies also market their “cradle to career” unified data systems as tools for state and local governments to use to support workforce planning and juvenile justice and law enforcement.

AI models are trained on data. When teachers and students use AI products in education, they are helping supply the data those models are trained on.

Q: My school is telling me they can consent on my behalf. Is this true?

A: No, but they may not know this.

An amicus brief filed by the FTC in September of 2025 is the FTC’s most definitive statement yet on what COPPA (the Children’s Online Privacy Protection Act) does and does not allow, and the brief has enormous implications for schools and EdTech companies. Until this filing, many EdTech companies sought permission only from schools–and not from parents, as COPPA requires–as to what information is collected about students and how that information may be commercialized. However, this was based on, at best, a misunderstanding, and at worst, a willful misreading of the law. In a nutshell, this amicus brief establishes that schools can no longer stand in the shoes of parents for the purpose of consenting to data collection.

I wrote a longer piece about this amicus brief here, and created a letter template for parents to use to send to their schools to inform them about this.

Q: So what can parents do?

A: The first thing parents can do is understand that this is a problem. Many schools and parents are just now coming around to the fact that student personal devices (like phones and smartwatches) should not be available to students during class time. This is absolutely the right thing to do in terms of what is best for children and learning.

However, if all personal phones and watches are banned, but schools still provide internet-connected iPads or Chromebooks to students, students are still engaging with harmful, manipulative, and addictive tools and platforms whose business model is entirely dependent on “time on device” and “user engagement.” Children are clever: they may no longer have their smartphones, but they can still chat within Google Docs, watch TikTok videos on Pinterest, download VPNs to bypass school filters, and watch YouTube videos (often unblocked and available to students in the Google Classroom suite of products; Google owns YouTube).

The second thing parents can do is to share this information with other parents. Print off or email this FAQ to your parenting peers. There is a clear need for collective action in pushing back on EdTech, and while these tools and products are harmful to learning and teaching, the privacy and data security issues are far more impactful and far less understood.

Q: So it’s not just about whether or not these EdTech products “work”? I should also be concerned about data and privacy? Anything else?

A: There are four questions we must insist schools answer before providing children access to EdTech products:

Is this effective?

Is this safe?

Is this legal?

Is this superior to a human teacher?

The answer to all four questions must be a resounding Yes. Currently, it is a terrifying No.

Q: Can I refuse? Opt out of EdTech?

A: Short answer, yes. Is it always easy to do this? No. Schools will say that it is not possible, that children will fall behind, that they cannot accommodate, that you cannot opt out of curriculum, etc. Schools saying that it is not allowed or not possible to refuse or opt out are essentially asking families to choose between their child’s education and protecting their child’s privacy and data. You may have to reach out to the EdTech Law Center for support.

Q: How do I opt out?

A: I have created an UnPlug EdTech Toolkit that contains much of the above information, plus a series of letter templates and resources on Substack that will support you in this process. I also recently recorded an in-depth webinar about how parents can opt out, including different opt-out pathways and how to continue to support teachers, as opting out disproportionately burdens them. You can view that webinar here.

Q: Have you (Emily) opted out of EdTech? How is that going?

A: Yes. My daughter is an 8th grader this year, and this is the second year she has declined the 1:1 Chromebook. We are fortunate to have the support of an excellent principal. We started the conversation with her the spring before 7th grade started and focused on building a good working relationship with school leadership. When my daughter does need to use a computer for typing an essay, for example, her English teacher has a spare one she can use. We have also opted her out of all standardized tests and online mental health assessments. (I go through all of this in the webinar).

This is far from ideal, however. We realize she still has an online presence in the learning management system, some of her assigned curriculum is still all-digital and has resulted in her using the loaner computer more than I would like, and we recently learned that the district is advising writing teachers to use AI to assess student papers.3 She will be entering high school in the fall and I have already communicated with the school counselor there about our plans to decline the 1:1. We received a supportive message back.

It should be noted that my daughter is thriving without the 1:1. She has very good grades, she is an active class participant, and she seems mostly unbothered by the fact that she is the only student without a laptop.

Concluding Thoughts:

I am not anti-technology; I am tech-intentional. I see a desperate need for technology education in schools (and at home) to teach children skills like typing (shockingly, many students with 1:1s don’t know how to type), media literacy, the ability to identify mis- and disinformation, and an understanding of what AI is and why it is still so risky.

But “EdTech” is not “Tech Ed.” What is currently happening in K-12 schools is mostly EdTech (or the “Tech Ed” provided is curricula delivered by the same companies that benefit from kids using it, or curricula that take a foregone-conclusion approach: “Kids will be online, so we should teach them to use social media more safely.” I reject this premise; there is no such thing as a “safe cigarette”).

We have a lot of work to do, but I believe that the future of schools is tech-intentional, and there are things we can do to get there. In fact, I have an entire talk on this very topic, which I delivered in Louisville, KY, at the Filson Historical Society in March 2025. You can view the talk here. More recently, I delivered another keynote for the Tikah Marom conference in Miami in March of 2026 called The Pen(cil) is Mightier Than the Sword: The Case for the Analog Classroom.4

For more on this topic, also be sure to check out these essays I’ve written:

I Refused the School-Issued Chromebook for My Middle-Schooler. You Can Too. Here’s How.

Ten Questions to Ask Your School Leaders About EdTech Products

The Hard Truth About EdTech: What Administrators, Superintendents, and Principals Need to Know

If EdTech Doesn’t Educate, What Does It Do? 16 Ways EdTech is Harmful to Children

Toppling the Tower: 5 Frustrations About EdTech, AI, and Education, and 3 Questions to Ask Instead

1. As one example, I had no idea that Seattle Schools, my home district, used iReady, because I couldn’t find anything on the school website about the contract or adoption of the product. But when I asked in a Seattle Schools Facebook group about it, parents and teachers from at least four different schools in the district said they use it. I have no idea how it came to be used in Seattle Schools.

2. They recently told me they are averaging 100 inquiries per week right now. They only opened two years ago.

3. When I followed up with the district about this, they stated that “teachers are not being instructed to use AI to assess student writing.” It was my daughter’s teacher who conveyed to me the district instruction to use AI. Make of that what you will.

4. It was this essay in which I ventured a hope that we could protect childhood a bit more by returning to pencils and paper in classrooms that led to me receiving my first death threat on this very platform. (I did report it to Substack and to law enforcement).